Recent advancements in technology have made it harder to keep track of what’s real and what’s fake online.

One example is the increased use of deep fakes – videos or images of a person in which their face or body has been digitally altered so that they appear to be someone else. Deep fakes are most often used maliciously to spread false information.

But a new tool is trying to combat this problem…

Finding the fakes

The new tool was designed by lead author Siwei Lyu and a team of researchers. In experiments on portrait-like photos, it proved 94% effective at identifying fakes.

The tool analyzes the light reflected in a subject’s eyes to figure out whether an image is real or not.

Lyu, a professor in the Computer Science and Engineering department at the University at Buffalo, stated:

“The cornea is almost like a perfect semi-sphere and is very reflective. So, anything that is coming to the eye with a light emitting from those sources will have an image on the cornea.”

When we look at something, it’s reflected in our eyes. In a real photo, the reflections in the two eyes would normally have the same shape and color, but deep fakes and other images made by AI often fail to capture this accurately.

The tool uses this failure to find small deviations in the reflected light in the eyes of the fake images.

How the tool works its magic

For the experiment, researchers collected real images from Flickr Faces-HQ and fake images from www.thispersondoesnotexist.com – a database of fake AI-generated faces that appear real.

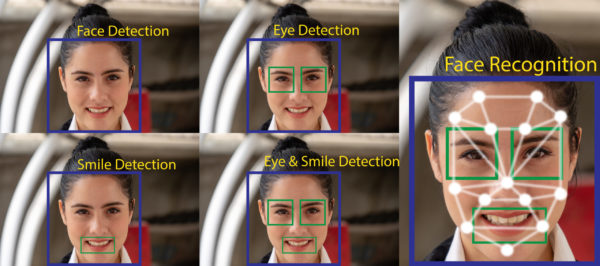

In the experiment, the team gathered real and fake images that were portrait-like with good angles and lighting. The tool then mapped out each face and examined the eyes and the light reflected in each eyeball.

It was able to compare, in extreme detail, differences in the shape, intensity, and other features of the reflected light.
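To give a rough feel for that comparison, here is a minimal sketch in Python. It is not the researchers' code. It assumes each eye has already been cropped and aligned into a small grayscale grid, treats very bright pixels as reflected light, and scores how much the bright regions in the two eyes overlap. The function names, the brightness threshold of 200, and the overlap score are all illustrative assumptions.

```python
# Illustrative sketch only; not the researchers' implementation.
# Each eye is a small grid of grayscale values (0-255), assumed to be
# already cropped and aligned so the two grids can be compared directly.

def highlight_mask(eye, threshold=200):
    """Mark pixels bright enough to count as reflected light (assumed cutoff)."""
    return [[pixel >= threshold for pixel in row] for row in eye]


def reflection_similarity(left_eye, right_eye, threshold=200):
    """Return the overlap (intersection over union) of the bright regions.

    1.0 means the reflections line up exactly, 0.0 means they do not
    overlap at all, and None means neither eye shows a reflection.
    """
    left = highlight_mask(left_eye, threshold)
    right = highlight_mask(right_eye, threshold)
    overlap = 0
    union = 0
    for left_row, right_row in zip(left, right):
        for l, r in zip(left_row, right_row):
            overlap += l and r
            union += l or r
    return overlap / union if union else None


# Toy 4x4 "eyes": a square highlight in the middle of the left eye.
left = [
    [0, 0, 0, 0],
    [0, 255, 255, 0],
    [0, 255, 255, 0],
    [0, 0, 0, 0],
]
matching_right = [row[:] for row in left]  # same reflection in both eyes
mismatched_right = [
    [255, 255, 0, 0],
    [0, 0, 0, 0],
    [0, 0, 0, 0],
    [0, 0, 0, 0],
]

print(reflection_similarity(left, matching_right))    # 1.0
print(reflection_similarity(left, mismatched_right))  # 0.0
```

A low score would only be a hint that something is off. A real detector would also need to find the face and eyes first, which this sketch skips entirely.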

While it all sounds promising, the tool still has some limits…

The problems

The first issue is that a reflected source of light is required.

Second, mismatched light reflections of the eyes can be edited by deep fakers.

Third, the method only looks at each individual pixel that’s reflected in the eyes, not the shape of the eye or the nature of what’s being reflected.

And lastly, the method needs the reflections within both eyes – if the second eye is not visible then it won’t work accurately.

Lyu has been researching machine learning and computer vision projects for over 20 years and believes this tool is important in preventing the spread of misinformation.

The paper on this experiment was accepted at the IEEE International Conference on Acoustics, Speech and Signal Processing, held in Toronto, Canada.

What do you think needs to be done to prevent the spread of deep fakes online? Should more people be aware of the impact technology has on our opinions? Let us know down below!
